We are going to create a Spring Boot application with Spring Web and Spring for Apache Kafka dependencies and use Spring Initializr to generate our project quickly. If the Kafka server runs without any error as well, we are ready to create a Spring Boot project. Windows users should again use bin\windows\ directory to run the server. bin/kafka-server-start.sh config/server.properties. Simply open a new tab on your command-line interpreter and run the following command to start the Kafka server. If the ZooKeeper instance runs without any error, it is time to start the Kafka server. Since Kafka console scripts are different for Unix-based and Windows platforms, on Windows platforms use bin\windows\instead of bin, and change the script extension to. bin/zookeeper-server-start.sh config/zookeeper.properties Once you are in the directory of the Kafka folder, kafka_2.12-2.5.0, run the following command to start a single-node ZooKeeper instance. We will use the convenience script packaged as a ZooKeeper server that comes with the Kafka. The good news is that you do not need to download it separately (but you can do it if you want to). % tar -xzf kafka_2.12-2.5.0.tgzĪs I ve already mentioned, the Kafka uses ZooKeeper. Simply open a command-line interpreter such as Terminal or cmd, go to the directory where kafka_2.12-2.5.0.tgz is downloaded and run the following lines one by one without %. That being said, we will need to install both in order to create this project.įirst, we need to download the source folder of Kafka from here.įirst, download the source folder here. Kafka uses ZooKeeper, an open-source technology that maintains configuration information and provides group services.

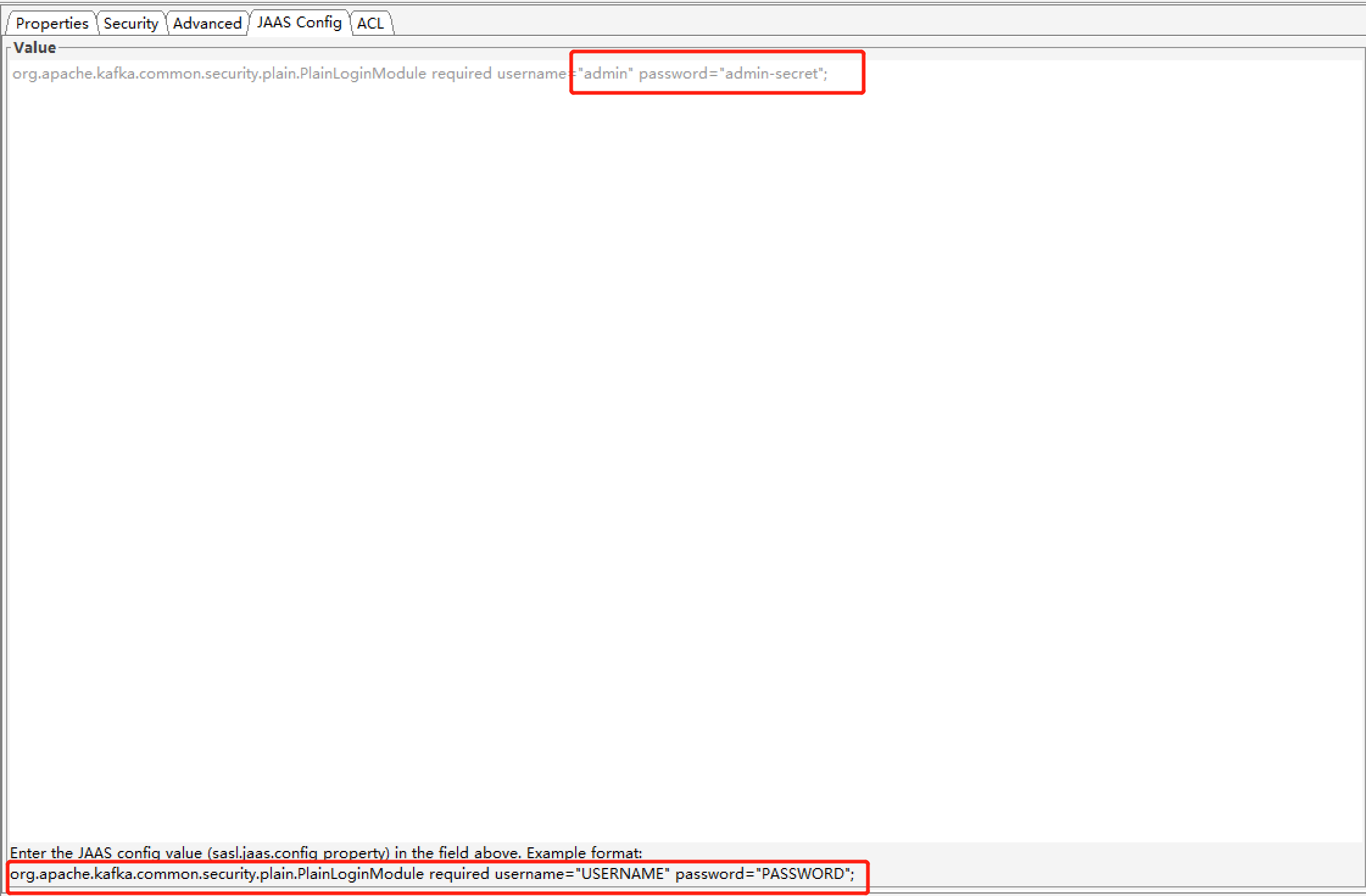

Today, we will create a Kafka project to publish messages and fetch them in real-time in Spring Boot. Good to know: ZooKeeper is required for running the Kafka right now, but it will be replaced with a Self-Managed Metadata Quorum in the future. ZooKeeper tracks the status of cluster nodes, topics, partitions, etc.If there is more than one broker, it is called Cluster. Broker manages the storage of messages in the topics.Consumer subscribes to topics, reads, and processes messages from the topics.Producer publishes messages to a topic or topics.Topic contains records or a collection of messages.Its unique design allows us to send and listen to messages in real-time.Īpache Kafka uses 5 components to process messages: What is Apache Kafka exactly? It is a powerful publish-subscribe messaging system that not only ensures speed, scalability, and durability but also stores and processes streams of records. Hope this saves someone time in the future.Apache Kafka is a genuinely likable name in the software industry decision-makers in large organizations appreciate how easy handling big data becomes, while developers love it for its operational simplicity. =. required username="KEY" password="PASSWORD" In our case, the template for this properties file is: =https properties file and pass it into each call to the binary via the -command-config option. : Java heap spaceĪt .(HeapByteBuffer.java:57)Īt (ByteBuffer.java:335)Īt .memory.MemoryPool$1.tryAllocate(MemoryPool.java:30)Īt .(NetworkReceive.java:112)Īt .(KafkaChannel.java:436)Īt .(KafkaChannel.java:397)Īt .(Selector.java:653)Īt .(Selector.java:574)Īt .(Selector.java:485)Īt .NetworkClient.poll(NetworkClient.java:539)Īt .admin.KafkaAdminClient$n(KafkaAdminClient.java:1152)Ĭounterintuitively, you are not running out of memory, but rather this arises as a result of not providing SSL credentials. 
In case you are getting the following OOM error when running kafka-consumer-groups: ERROR Uncaught exception in thread 'kafka-admin-client-thread | adminclient-1': (.utils.KafkaThread) Just leaving a note here to save everyone using a Kafka cluster protected by SSL time.
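The note does not say why missing SSL credentials would show up as a heap error. One plausible explanation, offered here as an assumption rather than something the note states, is that a client speaking plaintext reads the first four bytes of the broker's TLS reply as a message length and then tries to allocate a buffer of that size, which would fit the allocation frames in the stack trace. The sketch below only illustrates that arithmetic. The class name `SizePrefixDemo`, the helper `readSizePrefix`, and the sample bytes (the start of a TLS alert record) are hypothetical and are not taken from Kafka's code.

```java
import java.nio.ByteBuffer;

public class SizePrefixDemo {

    // Interprets the first four bytes as a big-endian length prefix,
    // the way a length-prefixed wire protocol would (assumption for illustration).
    static int readSizePrefix(byte[] firstBytes) {
        return ByteBuffer.wrap(firstBytes).getInt();
    }

    public static void main(String[] args) {
        // Assumed sample: TLS alert record type 0x15, version 0x0303,
        // followed by the high byte of the record length.
        byte[] tlsRecordStart = {0x15, 0x03, 0x03, 0x00};

        int size = readSizePrefix(tlsRecordStart);

        // prints "352518912 bytes requested" (about 336 MiB)
        System.out.println(size + " bytes requested");
    }
}
```

If this is indeed what happens, a small default heap for the command-line tools would be exhausted by a single bogus allocation of that size, which is why raising the heap does not address the cause while supplying the SSL settings does.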
